What is Vespa.ai?

Vespa is an AI search platform, available both as open source and as an enterprise offering, for building applications that need fast and accurate search, personalized recommendations, and real-time data serving at scale. Vespa isn't just a vector database or just a search engine. It's a complete serving engine where you can combine information retrieval with machine-learned ranking, re-ranking, and filtering, all in one place. Whether you're building a product search engine, a recommendation system, or a retrieval-augmented generation (RAG) application, Vespa gives you the tools to handle everything from small prototypes to systems that serve very large user bases over millions (or even billions) of documents.

The Problem Vespa Solves

Let's say you want to build a modern search application. The first thing you need is data (documents) and somewhere to store it. Once you have that figured out, you need to search through millions of documents, and simple keyword matching isn't going to cut it. A modern search engine needs to understand the meaning behind search queries, which is usually done using embeddings from language models. Your search application also needs to filter results by date, category, and sometimes even user preferences. Those results need to be ranked so the most relevant ones show up at the top. Then you might want a machine learning model (for example, one trained with XGBoost or LightGBM) to re-rank the top results based on various document features. And all of this has to happen in a fraction of a second!

Most systems force you to stitch together multiple tools to get all of this working. You might end up using a traditional search engine for text, a vector database to store embeddings, a feature store for user data, and a separate service for running ML models. All these moving parts add latency, complexity and potential points of failure at scale. Keeping everything in sync becomes a challenge of its own.

Vespa takes a different approach. It provides search, vector similarity, structured filtering, and machine learning inference in a single platform. Your data lives in Vespa, queries execute on that data, and ranking models run right where the data sits. This minimizes latency, reduces complexity, and lets you build sophisticated systems with fewer moving parts to keep in sync.

Where Vespa Comes From

Vespa was born at Yahoo, where engineers faced a fundamental challenge. They needed to serve hundreds of millions of users with search results, recommendations, and ads that were not just relevant but personalized in real time. Traditional search engines couldn't handle the scale. Using separate systems for retrieval and ranking created unacceptable latency. The team needed something that could compute personalized rankings over massive datasets while keeping response times under 100 milliseconds.

The solution was to bring computation to the data rather than moving data to the computation. Vespa was designed so that data and the code that processes it live on the same nodes. When a query comes in, Vespa distributes it to the relevant nodes, each node processes its portion of the data locally, and results get merged together. This architecture made it possible to evaluate complex machine-learned models during query time without sacrificing speed.
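
The scatter-gather pattern described above can be sketched in a few lines of Python. This is a toy illustration, not Vespa's implementation: the `search_node` and `scatter_gather` functions, the term-count scoring, and the sample documents are all assumptions made for the example. Each "node" scores only its own documents and returns a small top-k list, and the caller merges those lists.

```python
import heapq

def search_node(local_docs, query_terms, k):
    """Score the documents held on one node and return that node's top-k hits."""
    scored = []
    for doc_id, text in local_docs.items():
        words = text.lower().split()
        # Toy relevance: how many times the query terms occur in the document.
        score = sum(words.count(term) for term in query_terms)
        if score > 0:
            scored.append((score, doc_id))
    return heapq.nlargest(k, scored)

def scatter_gather(nodes, query, k=3):
    """Send the query to every node, then merge the per-node top-k lists."""
    query_terms = query.lower().split()
    # Scatter: each node only ever looks at its own local data.
    partial_results = [search_node(docs, query_terms, k) for docs in nodes]
    # Gather: merge the small per-node lists into a global top-k.
    merged = heapq.nlargest(k, (hit for hits in partial_results for hit in hits))
    return [(doc_id, score) for score, doc_id in merged]

# Two hypothetical nodes, each holding a slice of the corpus.
nodes = [
    {"d1": "vespa", "d2": "cooking pasta at home"},
    {"d3": "vector search and text search", "d4": "search ranking with vespa search"},
]
top_hits = scatter_gather(nodes, "vespa search", k=2)
print(top_hits)
```

Only the small per-node result lists cross the network in this design; the documents themselves stay where they are stored.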

Today, Vespa powers applications at Yahoo as well as AI applications at companies like Spotify (for music recommendations) and Perplexity (for their retrieval-augmented generation system, which searches across billions of documents to provide contextual answers).

Core Capabilities

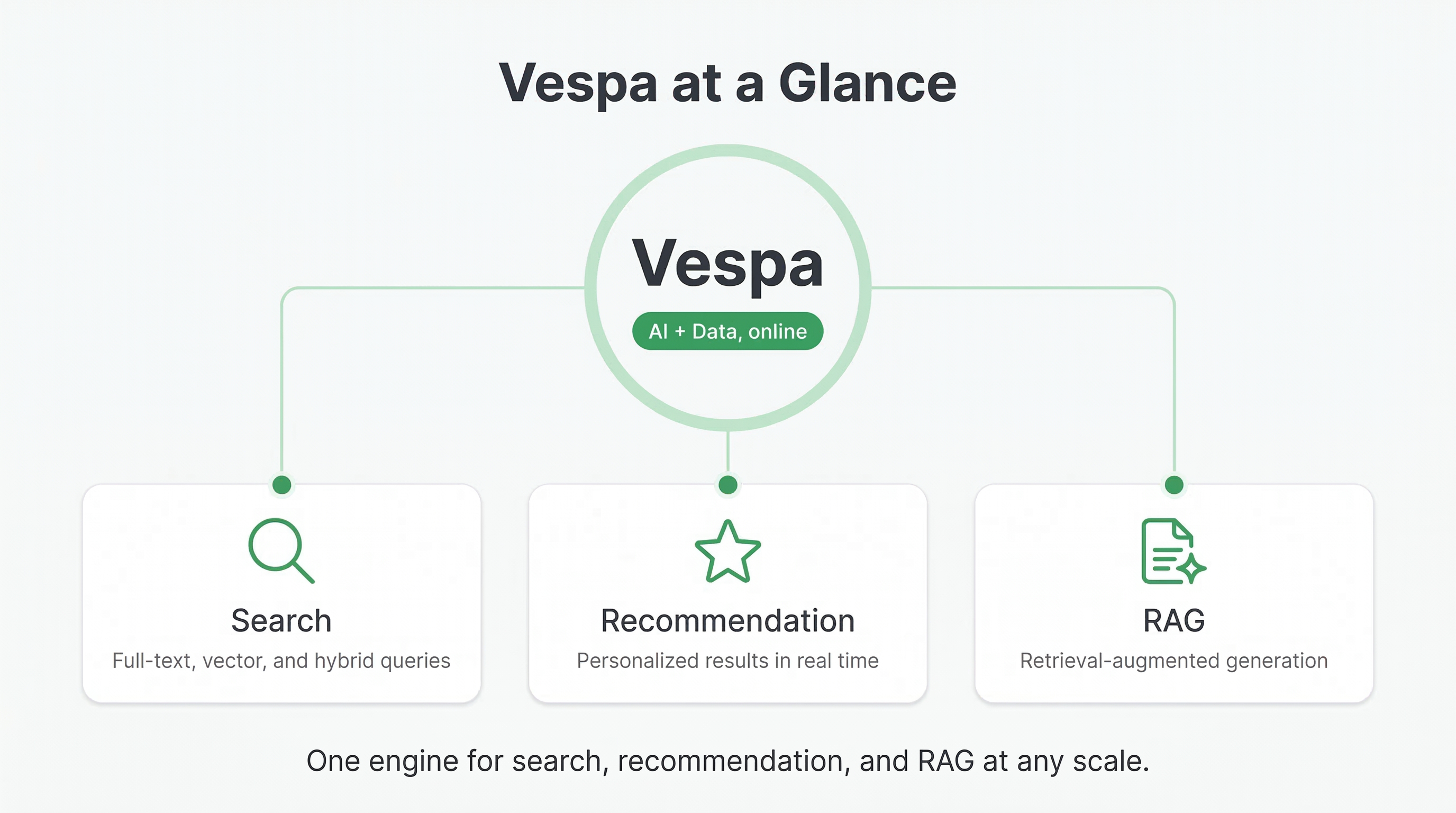

Vespa brings together three critical capabilities that modern AI applications need, and it keeps them working together efficiently.

Search and retrieval across different types of data. Vespa handles full-text search with features like BM25 ranking and linguistic processing such as stemming. It supports vector search for semantic similarity using embeddings from sources such as OpenAI's embedding models or Sentence Transformers. More importantly, it lets you combine these approaches in a single query. You can search for keywords, find semantically similar documents using vectors, and filter by structured attributes like dates or categories, all in one operation. This hybrid approach is essential for modern AI applications where you need both precision and recall.
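
To make the idea of hybrid retrieval concrete, here is a minimal pure-Python sketch that applies a structured filter, a keyword score, and a vector similarity in one pass. It is not Vespa's API or its scoring model: the `hybrid_search` function, the `text_weight` blending parameter, and the sample documents are assumptions chosen for illustration.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def hybrid_search(docs, keywords, query_vector, category, text_weight=0.5):
    """Filter by category, then blend a keyword score with a vector score."""
    results = []
    for doc in docs:
        if doc["category"] != category:  # structured filter
            continue
        words = doc["text"].lower().split()
        # Fraction of query keywords that appear in the document text.
        keyword_score = sum(1 for kw in keywords if kw in words) / max(len(keywords), 1)
        vector_score = cosine(query_vector, doc["embedding"])
        score = text_weight * keyword_score + (1 - text_weight) * vector_score
        results.append((doc["id"], round(score, 3)))
    return sorted(results, key=lambda r: r[1], reverse=True)

# Hypothetical documents with tiny two-dimensional embeddings.
docs = [
    {"id": "a", "text": "red running shoes", "category": "shoes", "embedding": [1.0, 0.0]},
    {"id": "b", "text": "trail sneakers", "category": "shoes", "embedding": [0.8, 0.6]},
    {"id": "c", "text": "red running jacket", "category": "jackets", "embedding": [1.0, 0.0]},
]
hits = hybrid_search(docs, ["running", "shoes"], [1.0, 0.0], "shoes")
print(hits)
```

Document "b" shares no keywords with the query but still surfaces through vector similarity, while "c" is removed by the category filter even though its text and embedding match well.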

Real-time data serving. When you write a document to Vespa, it becomes searchable within milliseconds. There's no batch indexing process where you sit around waiting hours for new data to show up. You can update individual fields in documents without rewriting the entire document. This makes Vespa a good fit for applications where data changes constantly, like inventory systems, news feeds, or social media platforms. Actual throughput depends on hardware, document size, and the kind of update, but Vespa can handle over 100,000 writes per second per node while continuing to serve queries with low latency.
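
As a rough sketch of what a partial update looks like, the snippet below builds the request path and JSON body for changing two fields of one document. It follows the shape of Vespa's `/document/v1` HTTP API as I understand it (an update is sent as an HTTP PUT whose body maps each field to an `assign` operation); check the official reference before relying on it. The namespace `shop`, document type `product`, ID `sku-123`, and the field names are hypothetical, and nothing is sent over the network here.

```python
import json

def build_partial_update(namespace, doc_type, doc_id, changes):
    """Build the path and JSON body for assigning new values to selected fields."""
    path = f"/document/v1/{namespace}/{doc_type}/docid/{doc_id}"
    # Only the listed fields are touched; all other fields keep their values.
    body = {"fields": {name: {"assign": value} for name, value in changes.items()}}
    return path, json.dumps(body, sort_keys=True)

# Hypothetical inventory change: new stock level and price for one product.
path, body = build_partial_update(
    "shop", "product", "sku-123", {"in_stock": 4, "price": 19.99}
)
print("PUT", path)
print(body)
```

The request carries only the changed fields, which is what makes frequent updates to things like stock levels cheap compared with resending the full document.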

On-node machine learning inference. You can import models in formats like ONNX, XGBoost, or LightGBM, and Vespa will run them during query processing. This means you can use neural networks, gradient boosted decision trees, or other models that can be exported to a supported format to rank your search results. Because the models run on the same nodes where your data lives, there's no network round trip to an external service. Vespa also supports multi-phase ranking, where you apply fast functions to all matches first, then use expensive models only on the top candidates. This keeps queries fast even when you're using heavy models.
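
The multi-phase idea can be sketched as follows. This is a toy model of the technique, not Vespa's ranking framework: `cheap_score`, `expensive_score`, the `rerank_count` parameter, and the weights are assumptions made for the example, and `expensive_score` merely stands in for a real model such as a gradient boosted tree or neural network.

```python
model_calls = []  # records which documents the expensive model was run on

def cheap_score(doc, query_terms):
    """First phase: a fast term-count score applied to every matched document."""
    words = doc["text"].lower().split()
    return sum(words.count(term) for term in query_terms)

def expensive_score(doc):
    """Second phase: stand-in for a costly model, run on few documents."""
    model_calls.append(doc["id"])
    return 0.7 * doc["popularity"] + 0.3 * doc["freshness"]

def two_phase_rank(docs, query_terms, rerank_count=2):
    """Rank everything cheaply, then re-rank only the top candidates."""
    first_phase = sorted(docs, key=lambda d: cheap_score(d, query_terms), reverse=True)
    candidates = first_phase[:rerank_count]
    reranked = sorted(candidates, key=expensive_score, reverse=True)
    # Documents below the cutoff keep their first-phase order.
    return [d["id"] for d in reranked] + [d["id"] for d in first_phase[rerank_count:]]

# Hypothetical matched documents with precomputed features.
docs = [
    {"id": "d1", "text": "vespa ranking ranking", "popularity": 0.2, "freshness": 0.1},
    {"id": "d2", "text": "ranking models", "popularity": 0.9, "freshness": 0.8},
    {"id": "d3", "text": "cooking tips", "popularity": 1.0, "freshness": 1.0},
]
ranked = two_phase_rank(docs, ["ranking"], rerank_count=2)
print(ranked, "expensive model calls:", len(model_calls))
```

The expensive function runs on two documents rather than three here; with millions of matches and a re-rank window of a few hundred, the same structure is what keeps heavy models affordable at query time.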

What Makes Vespa Different

The key difference between Vespa and other systems is that it was designed from the ground up as a unified platform. Many modern architectures try to combine separate tools for vector search, traditional search, and ML serving. They use a vector database for embeddings, a search engine for text, and call out to a model serving platform for ranking. Each boundary between these systems adds latency and complexity.

Vespa gets rid of these boundaries. Everything happens in one system with one query language and one way of thinking about your data. When you write a Vespa query, you can combine text search, vector similarity, structured filters, and ML model execution in a single request. The system optimizes execution across all these operations together rather than treating them as separate concerns.
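
For a sense of what such a combined request might look like, the sketch below assembles a query body in Python. The YQL operators (`userQuery()`, `nearestNeighbor` with a `targetHits` annotation) and the `ranking` and `input.query(...)` parameters reflect my understanding of Vespa's query API and should be verified against the official reference. The schema name `product`, the fields `embedding` and `price`, the tensor name `q`, and the rank profile name `hybrid` are hypothetical and would need to exist in your own application.

```python
import json

def build_hybrid_query(user_text, query_embedding, max_price, hits=10):
    """Assemble one request that mixes text match, vector match, and a filter."""
    yql = (
        "select * from product where "
        "(userQuery() or ({targetHits: 100}nearestNeighbor(embedding, q))) "
        # float() ensures only a number is ever interpolated into the query.
        f"and price < {float(max_price)}"
    )
    return {
        "yql": yql,
        "query": user_text,                 # text consumed by userQuery()
        "ranking": "hybrid",                # hypothetical rank profile name
        "input.query(q)": query_embedding,  # query vector for nearestNeighbor
        "hits": hits,
    }

request = build_hybrid_query("running shoes", [0.1, 0.2, 0.3], 100)
print(json.dumps(request, indent=2))
```

The ranking model itself is not spelled out in the request; it is selected by name, with the scoring logic defined in the application's rank profile.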

This unified approach also means lower latency. Vespa can maintain sub-100 millisecond response times even when computing complex ranking functions, running neural network inference, and searching across billions of documents. It achieves this through careful engineering: computation happens in parallel across many nodes, each node processes its local data, and the results are merged efficiently.

Another thing that sets Vespa apart is how it handles ranking. Most search systems let you sort by a score, but Vespa treats ranking as a first-class concern. You can define multi-phase ranking functions that combine text signals, vector similarities, structured data, user context, and machine-learned model scores. You can test different ranking approaches without reindexing your data. This flexibility matters a lot when building AI applications where ranking quality directly impacts the user experience.

Common Use Cases

People use Vespa to build many different types of applications. E-commerce sites use it for product search where they need to combine text matching with filters on price, availability, and categories, then rank results using models that predict purchase likelihood. Content platforms use it for recommendation systems that consider user history, content embeddings, and real-time signals to personalize feeds.

Question answering systems and RAG applications use Vespa to retrieve relevant documents from large collections. When a user asks a question, the application converts it to an embedding, searches Vespa for similar documents, and passes those documents to a language model for generating an answer. Vespa's hybrid search capabilities mean you can combine semantic search with keyword matching and metadata filters to find the most relevant context.
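
The retrieval half of that RAG flow can be sketched end to end with stand-ins for the real components. Everything here is an assumption for illustration: `embed` is a toy word-count embedding rather than a language model, `retrieve` is an in-memory dot-product search rather than a call to Vespa, and the final call to a language model is left out, so the sketch stops at the prompt.

```python
def embed(text):
    """Toy embedding: counts of a few fixed vocabulary words."""
    vocab = ["vespa", "ranking", "pasta"]
    words = text.lower().split()
    return [words.count(w) for w in vocab]

def retrieve(question, corpus, k=1):
    """Return the k documents whose embeddings best match the question."""
    q = embed(question)

    def similarity(doc):
        return sum(a * b for a, b in zip(q, embed(doc)))

    return sorted(corpus, key=similarity, reverse=True)[:k]

def build_prompt(question, context_docs):
    """Combine retrieved context and the question into one prompt string."""
    context = "\n".join(f"- {doc}" for doc in context_docs)
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

corpus = ["vespa supports multi-phase ranking", "boil pasta for ten minutes"]
question = "how does vespa do ranking"
context_docs = retrieve(question, corpus)
prompt = build_prompt(question, context_docs)
print(prompt)
```

In a real application the `retrieve` step is where a hybrid query with keyword matching and metadata filters would replace the plain similarity search.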

Companies also use Vespa for real-time personalization in ads and content targeting. These applications need to evaluate millions of candidates against user profiles in real time, applying ML models to predict relevance or click-through rates. Vespa's ability to run models at query time makes this possible without having to pre-compute scores for every user-item pair.

How You Can Run Vespa

You have a few options for how to deploy Vespa. For local development and testing, you can run it on your laptop using Docker. The Vespa quick start guide walks you through getting a local instance up and running in minutes. This is great for learning, prototyping, and developing your application.

For production deployments, you can run Vespa on your own infrastructure. Vespa provides container images and packages for major Linux distributions. You control the servers, the network, and the data. This works well when you have specific compliance requirements, need to keep data on-premises, or want full control over costs and configuration.

Or you can use Vespa Cloud, the managed service run by the team that builds Vespa. With Vespa Cloud, you focus on your application while the platform handles infrastructure, scaling, upgrades, and operations. You define your schemas and ranking functions, deploy your application, and Vespa Cloud takes care of the underlying distributed system. A lot of teams go this route because it's the fastest way to production and lets them focus on application logic instead of infrastructure.

Throughout this course, we'll show examples for both local development and cloud deployment. The application code and concepts are the same regardless of where you run Vespa.

What You Will Learn

This course will take you from basic concepts to building production-ready applications on Vespa. You'll learn how to model your data using schemas, how to write queries that combine different search techniques, how to build ranking functions that use machine learning, and how to deploy and operate Vespa applications.

We'll start with fundamental concepts like documents, schemas, and fields. Then we'll move on to different types of queries and how to combine them. You'll learn about ranking in depth, including how to write custom ranking expressions and use machine-learned models. Later modules cover vector search, learning to rank, and domain-specific patterns.

Each module includes practical examples and hands-on exercises. You'll see how real companies use Vespa and learn patterns you can apply to your own projects. By the end, you'll understand not just how to use Vespa's features, but when to use them and why.

Next Steps

In the next section, we'll cover the fundamental building blocks of Vespa applications. You'll learn about documents, schemas, queries, and ranking, and understand how these pieces fit together. We'll look at a simple example application to see how data flows through the system from ingestion to query results.

For detailed technical documentation on all of Vespa's features, check out the official Vespa documentation. This course focuses on teaching you concepts and patterns, while the docs provide comprehensive API references and configuration options.